Web Scraping vs Web Crawling: Differences and Use Cases

As the internet has developed, acquiring and using data has become increasingly important. Whether for market analysis, news aggregation, or supplying data for scientific research, web scraping and web crawling are two widely used technologies. However, many people confuse the two and assume they are the same thing. In fact, although web scraping and web crawling overlap, they differ in how they work, where they are applied, and in their technical details. This article examines the differences between the two and discusses the use cases for each.

Web Crawling

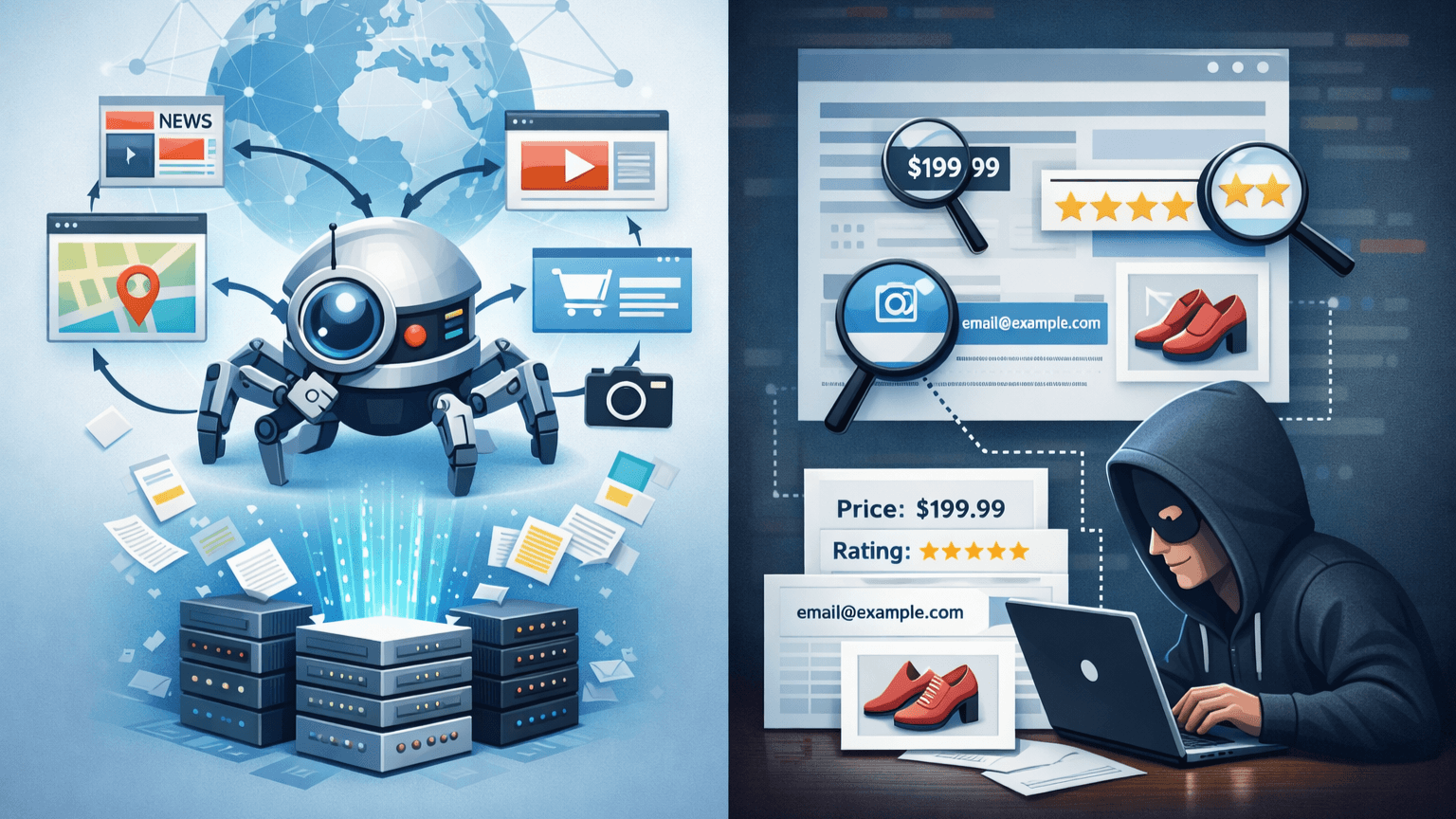

1. What is Web Crawling?

A web crawler, often called a "spider" or "bot," has the core task of discovering and indexing pages. It works like an explorer navigating the maze of the internet: starting from one webpage, it follows the links on that page to other pages, and repeats the cycle.

2. How Crawlers Work

Crawlers do not care about specific tables or prices; they are concerned with the structure of sites and the links between pages.

Seed URL: Starts from a given URL.

Extract Links: Identifies all hyperlinks on the page.

Update Index: Records newly discovered pages and queues any not-yet-visited links so the cycle can repeat.

Follow Protocols: Well-behaved crawlers read the website's robots.txt file first to confirm which areas they are allowed to access.
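Taken together, the steps above amount to a breadth-first traversal of the link graph. The following Python sketch shows that loop using only the standard library. It is a minimal illustration under several assumptions: `fetch` is a hypothetical caller-supplied function that returns a page's HTML (or `None` on failure), the robots.txt rules are passed in as lines rather than downloaded, and the crawl stays on the seed URL's host. A production crawler would also need politeness delays, retries, and persistent storage.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse
from urllib.robotparser import RobotFileParser


class LinkExtractor(HTMLParser):
    """Collects absolute URLs from every <a href="..."> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links and drop #fragments so /b and /b#top count once.
                absolute, _fragment = urldefrag(urljoin(self.base_url, value))
                self.links.append(absolute)


def crawl(seed_url, fetch, robots_lines, user_agent="example-bot", max_pages=100):
    """Breadth-first crawl from seed_url; returns {url: [outgoing links]}.

    fetch(url) -> str | None is a hypothetical interface supplied by the caller.
    Only URLs on the seed's host are followed, a simplification for this sketch.
    """
    robots = RobotFileParser()
    robots.parse(robots_lines)            # rules are given as lines here, not downloaded
    host = urlparse(seed_url).netloc

    queue = deque([seed_url])             # Seed URL
    seen = {seed_url}                     # deduplication set
    index = {}                            # the "index": page -> links found on it

    while queue and len(index) < max_pages:
        url = queue.popleft()
        if not robots.can_fetch(user_agent, url):   # Follow Protocols
            continue
        html = fetch(url)
        if html is None:
            continue
        extractor = LinkExtractor(url)
        extractor.feed(html)              # Extract Links
        index[url] = extractor.links      # Update Index
        for link in extractor.links:
            if urlparse(link).netloc == host and link not in seen:
                seen.add(link)
                queue.append(link)
    return index
```

To try it offline, pass `pages.get` as `fetch`, where `pages` is a dictionary mapping URLs to HTML strings; for real crawling, `fetch` could wrap `urllib.request.urlopen`.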

3. Representative Cases

Search Engines (Google, Bing, Baidu): This is the largest-scale application of crawlers. Search engines crawl continuously to keep their results up to date.

Website Health Check: Automatically checks a website for dead links (for example, links that return 404 errors).
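A dead-link check of this kind can be sketched as follows: take the URLs a crawler has collected, request each one, and report those that fail. This minimal Python illustration assumes the target server accepts HTTP `HEAD` requests (some do not, and would need a `GET` fallback); the `get_status` parameter is a hypothetical hook that lets the checker run without network access.

```python
import urllib.error
import urllib.request


def http_status(url, timeout=10.0):
    """Return the HTTP status code for url, or None if the host is unreachable."""
    # HEAD asks for headers only, which is cheaper than downloading the page.
    request = urllib.request.Request(
        url, method="HEAD", headers={"User-Agent": "example-link-checker"}
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:  # 4xx/5xx responses are raised as HTTPError
        return err.code
    except OSError:                        # DNS failure, refused connection, timeout, ...
        return None


def find_dead_links(urls, get_status=http_status):
    """Return (url, status) pairs for links that are unreachable or return 4xx/5xx."""
    dead = []
    for url in urls:
        status = get_status(url)
        if status is None or status >= 400:
            dead.append((url, status))
    return dead
```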

Web Scraping

1. What is Web Scraping?

Web scraping is the process of extracting specific data from web pages. If crawling is like mapping a forest, scraping is going straight to a particular tree to pick a particular fruit.

2. How Scraping Works

Scrapers are usually custom-built for specific target pages, because they depend on the exact HTML structure of those pages.

Parse HTML: Parses the page's HTML source and precisely locates the required data using XPath expressions, CSS selectors, or similar tools.

Data Cleaning: Converts unstructured webpage content into a structured format (such as JSON, CSV, or Excel).

Storage: Stores extracted phone numbers, product prices, or comments in a database.
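The parse, clean, and store steps can be illustrated with a small standard-library sketch. The markup, the `name` and `price` class names, and the sample products are all hypothetical; real pages need selectors written for their actual structure, and libraries such as Beautiful Soup or lxml (which supports XPath) usually make that easier than the bare `html.parser` used here. The parser also assumes each field's text sits directly inside its tagged element, with no nested tags.

```python
import csv
import io
import re
import sqlite3
from html.parser import HTMLParser


class ProductParser(HTMLParser):
    """Parse step: collects the text of elements whose class is 'name' or 'price'."""

    FIELDS = {"name", "price"}  # hypothetical class names used by the target page

    def __init__(self):
        super().__init__()
        self.field = None  # field whose text is currently being read
        self.row = {}
        self.rows = []

    def handle_starttag(self, tag, attrs):
        for cls in (dict(attrs).get("class") or "").split():
            if cls in self.FIELDS:
                self.field = cls

    def handle_data(self, data):
        if self.field:
            self.row[self.field] = self.row.get(self.field, "") + data

    def handle_endtag(self, tag):
        if self.field:
            self.field = None
            if self.FIELDS.issubset(self.row):  # both fields seen: one product is complete
                self.rows.append(self.row)
                self.row = {}


def clean_price(text):
    """Clean step: '$1,299.00' -> 1299.0 (assumes '.' decimals and ',' thousands)."""
    return float(re.sub(r"[^\d.]", "", text))


def scrape(html):
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return [{"name": r["name"].strip(), "price": clean_price(r["price"])} for r in parser.rows]


def to_csv(records):
    """Structured output: the records as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def store(records, db_path=":memory:"):
    """Storage step: insert records into a SQLite table and return the connection."""
    conn = sqlite3.connect(db_path)
    conn.execute("CREATE TABLE IF NOT EXISTS products (name TEXT, price REAL)")
    conn.executemany("INSERT INTO products (name, price) VALUES (:name, :price)", records)
    conn.commit()
    return conn
```

Calling `scrape(html)` on a page yields a list of dictionaries, which `to_csv` turns into a file-ready string and `store` writes to a database.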

3. Representative Cases

Price Monitoring: Scrapes product prices from Amazon or other e-commerce platforms to inform pricing strategies.

Sentiment Analysis: Scrapes posts with specific keywords from social media to analyze public sentiment.

In-Depth Comparison: Scraping vs Crawling

To clearly illustrate the differences between the two, we can compare them in the table below:

| Dimension | Web Crawling | Web Scraping |
|---|---|---|
| Core Purpose | Discover, index, and map pages for search | Extract, transform, store, and analyze data |
| Breadth vs Depth | Breadth: may span millions of domains | Depth: focuses on specific pages or fields |
| Technical Focus | Link extraction, URL deduplication, following robots.txt | HTML parsing, handling anti-scraping measures, data cleaning |
| Result Format | Search index of discovered URLs and links | Structured files or tables (CSV, JSON, SQL) |
| Typical Tools | Apache Nutch, Scrapy (broad crawls) | Beautiful Soup, Selenium, Puppeteer |

How Do They Work Together?

In large-scale projects, scraping and crawling often work as a "golden pair."

Imagine you are building a nationwide real estate analysis platform:

Crawling Phase: You write a crawler that jumps across major real estate agency websites, collecting the URLs of all property detail pages and storing these URLs in a queue.

Scraping Phase: You design a scraper for these detail pages, specifically extracting "price," "square meters," "location," and "year built" from each page.
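The hand-off between the two phases can be sketched as a queue of URLs that the crawler fills and the scraper drains. In this minimal Python sketch, the URL pattern `/listing/<id>`, the `data-field` attributes, and the field names are hypothetical stand-ins for a real site's markup, `fetch` is a caller-supplied function, and the crawler is assumed to have already produced `discovered_urls`.

```python
import re
from collections import deque
from html.parser import HTMLParser

DETAIL_PAGE = re.compile(r"/listing/\d+$")  # hypothetical URL pattern of property detail pages
FIELDS = ("price", "area_m2", "location", "year_built")


class ListingParser(HTMLParser):
    """Reads text from elements tagged with a hypothetical data-field attribute."""

    def __init__(self):
        super().__init__()
        self.record = {}
        self._field = None

    def handle_starttag(self, tag, attrs):
        field = dict(attrs).get("data-field")
        if field in FIELDS:
            self._field = field

    def handle_data(self, data):
        if self._field:
            self.record[self._field] = self.record.get(self._field, "") + data

    def handle_endtag(self, tag):
        self._field = None


def run_pipeline(discovered_urls, fetch):
    # Crawling phase output: keep only detail pages and put them in a queue.
    queue = deque(url for url in discovered_urls if DETAIL_PAGE.search(url))
    results = []
    # Scraping phase: extract the four fields from each queued page.
    while queue:
        url = queue.popleft()
        parser = ListingParser()
        parser.feed(fetch(url))
        parser.close()
        results.append({"url": url, **{k: v.strip() for k, v in parser.record.items()}})
    return results
```

Separating the phases this way lets the crawler and scraper run at different speeds, or on different machines, with the queue as the only shared interface.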

Enhancing Scraping Efficiency and Bypassing Blocks

1. Using Proxies in Web Crawling

When a crawler requests pages from a site at high volume, the site may flag the frequent requests as abnormal traffic and block the crawler's IP address, halting the crawl. Routing requests through proxies spreads them across many IP addresses, which can mitigate this problem.

IP Rotation: A proxy pool is a collection of many proxy IPs. By drawing IPs from the pool at random for each request, a crawler can keep changing its IP address and avoid being flagged as abnormal traffic by the target website.

Bypassing IP Blocks: Some websites block IPs based on access frequency or on where the IP address comes from. Sending requests through proxies lets a crawler keep working when a single IP runs into these restrictions.

Regional and Language Customization: Proxy servers can provide IP addresses from different regions, which is essential for tasks that need region-specific content. For example, to collect the product prices shown to shoppers in the US, a crawler can use a US proxy IP to appear as a local user.
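The three techniques above (random rotation from a pool, switching proxies when blocked, and picking proxies by region) can be combined in a short sketch built on `urllib.request.ProxyHandler`. The pool entries, their country tags, and the use of HTTP 403/429 as the "blocked" signal are assumptions for illustration; the 203.0.113.x addresses come from a documentation-only range, so substitute real endpoints. `open_fn` is a hypothetical hook so the logic can be exercised without a network.

```python
import random
import urllib.error
import urllib.request

# Hypothetical pool: proxy URLs tagged with a country code.
# 203.0.113.0/24 is reserved for documentation; replace with real endpoints.
PROXY_POOL = [
    {"url": "http://203.0.113.10:8080", "country": "US"},
    {"url": "http://203.0.113.11:8080", "country": "US"},
    {"url": "http://203.0.113.20:8080", "country": "DE"},
]
BLOCKED_STATUSES = {403, 429}  # assumed signals that this IP was refused or rate-limited


def open_with_proxy(proxy_url, url, timeout=10.0):
    """Fetch url through a single proxy; returns (status, body)."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    opener = urllib.request.build_opener(handler)
    try:
        with opener.open(url, timeout=timeout) as response:
            return response.status, response.read()
    except urllib.error.HTTPError as err:  # 4xx/5xx responses arrive as HTTPError
        return err.code, b""
    # Other network errors (URLError, timeouts) propagate to the caller in this sketch.


def fetch_rotating(url, pool=PROXY_POOL, country=None, max_attempts=3,
                   open_fn=open_with_proxy, rng=random):
    """Try up to max_attempts distinct proxies, in random order, until one is not blocked."""
    candidates = [p for p in pool if country is None or p["country"] == country]
    if not candidates:
        raise ValueError(f"no proxies available for country {country!r}")
    rng.shuffle(candidates)  # random rotation without reusing a proxy within one call
    last_status = None
    for proxy in candidates[:max_attempts]:
        status, body = open_fn(proxy["url"], url)
        if status not in BLOCKED_STATUSES:
            return proxy["url"], status, body
        last_status = status
    raise RuntimeError(f"all attempted proxies were blocked (last status {last_status})")
```

Passing `country="US"` restricts the attempt to US-tagged proxies, which is how region-specific content such as US prices would be requested.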

2. Using Proxies in Web Scraping

As with web crawling, web scraping often relies on proxies to get past the anti-scraping measures of some websites. Especially when scraping large e-commerce platforms, social media, or news websites, frequent requests may lead to account bans or IP blocks, and proxies help keep data extraction continuous and stable.

Preventing IP Bans: When a large volume of data must be scraped from one platform, spreading requests across proxies reduces the risk that high-frequency requests get an IP banned.

Avoiding Anti-Scraping Strategies: Some websites detect automated scraping by inspecting the IP address, User-Agent, cookies, and other request details. Rotating IPs through proxies addresses the IP signal; the other signals need their own handling, such as sending realistic headers and keeping cookies consistent within a session, so that the traffic resembles that of real users.
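Because sites inspect more than the IP address, one common approach is to treat the proxy IP, `User-Agent`, and cookies as a single "session" that stays consistent across a series of requests and is replaced as a unit. The sketch below assumes a proxy URL of the same form as a pool entry; the `User-Agent` strings are illustrative examples rather than a maintained list, and `make_session` is a hypothetical helper.

```python
import http.cookiejar
import random
import urllib.request

# Illustrative User-Agent strings; a real list would need to be kept current.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Safari/605.1.15",
]


def make_session(proxy_url, rng=random):
    """Build an opener whose proxy, User-Agent, and cookie jar stay fixed together."""
    jar = http.cookiejar.CookieJar()  # cookies set by the site persist across requests
    opener = urllib.request.build_opener(
        urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url}),
        urllib.request.HTTPCookieProcessor(jar),
    )
    # Default headers sent with every request made through this opener.
    opener.addheaders = [
        ("User-Agent", rng.choice(USER_AGENTS)),
        ("Accept-Language", "en-US,en;q=0.9"),
    ]
    return opener, jar
```

Requests are then made with `opener.open(url)`. When a site starts refusing a session, the idea is to discard the whole session and build a new one, rather than changing only the IP while keeping the old cookies and headers.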

Application Scenarios

1. Real-Time Price Adjustment in E-Commerce

Large e-commerce companies scrape competitors' prices and inventory and feed them into algorithms that adjust their own prices automatically. This requires very frequent scraping that can withstand blocking, which usually involves proxy IPs.

2. Machine Learning and AI Training

Large language models (LLMs) such as GPT-4 rely heavily on large-scale web crawling for training data: text collected from Wikipedia, academic papers, news reports, and similar sources provides the material the models learn from.

3. Financial Investment and Credit Assessment

Hedge funds scrape retailers' sales data or logistics information to forecast companies' financial performance. Banks may scrape publicly available litigation records about companies to support credit risk assessment.

Legal and Ethical Considerations: A Red Line Not to Cross

Whether crawling or scraping, operations must be conducted within the legal framework.

Copyright and Ownership: Even when data is publicly accessible, scraping it at scale and using it commercially may infringe copyright or database rights.

Privacy Protection: Never scrape personally identifiable information (PII) without authorization, such as personal ID numbers or private chat records.

Server Load: Sending requests too frequently can have the same effect as a denial-of-service (DoS) attack and may crash the target server.
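A simple safeguard against overloading a server is to enforce a minimum interval between requests to the same host. The sketch below shows one way to do that; the one-second default is an arbitrary assumption, and where a site's robots.txt declares a `Crawl-delay`, the value returned by `urllib.robotparser.RobotFileParser.crawl_delay` is a reasonable alternative source for it. `clock` and `sleep` are injectable so the behavior can be tested without real waiting.

```python
import time
from urllib.parse import urlparse


class HostRateLimiter:
    """Blocks until at least min_interval seconds have passed since the last request to a host."""

    def __init__(self, min_interval=1.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.clock = clock
        self.sleep = sleep
        self.last_request = {}  # host -> time of the most recent request

    def wait(self, url):
        host = urlparse(url).netloc
        last = self.last_request.get(host)
        if last is not None:
            remaining = self.min_interval - (self.clock() - last)
            if remaining > 0:
                self.sleep(remaining)
        self.last_request[host] = self.clock()
```

Calling `limiter.wait(url)` immediately before each request spaces out hits to the same host while leaving requests to different hosts unaffected.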

Conclusion

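Web crawling discovers and indexes pages across the web, while web scraping extracts specific data from particular pages; large projects often combine the two, crawling first and then scraping.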

IPDeep provides high-quality proxy IPs of various types for web crawling and web scraping, with over 10 million high-quality IP resources covering more than 200 countries and regions worldwide. Create an account now to try our proxy services for free!